If there was ever proof that people are forming a deep emotional reliance on ChatGPT, OpenAI’s new Trusted Contact feature might be it.

Speaking at Sequoia Capital’s AI Ascent event last May, OpenAI CEO Sam Altman said young people were using ChatGPT like an operating system for life, not just for productivity but for major personal decisions.

“I mean, that stuff, I think, is all cool and impressive,” Altman said. “And there’s this other thing where, like, they don’t really make life decisions without asking ChatGPT what they should do.”

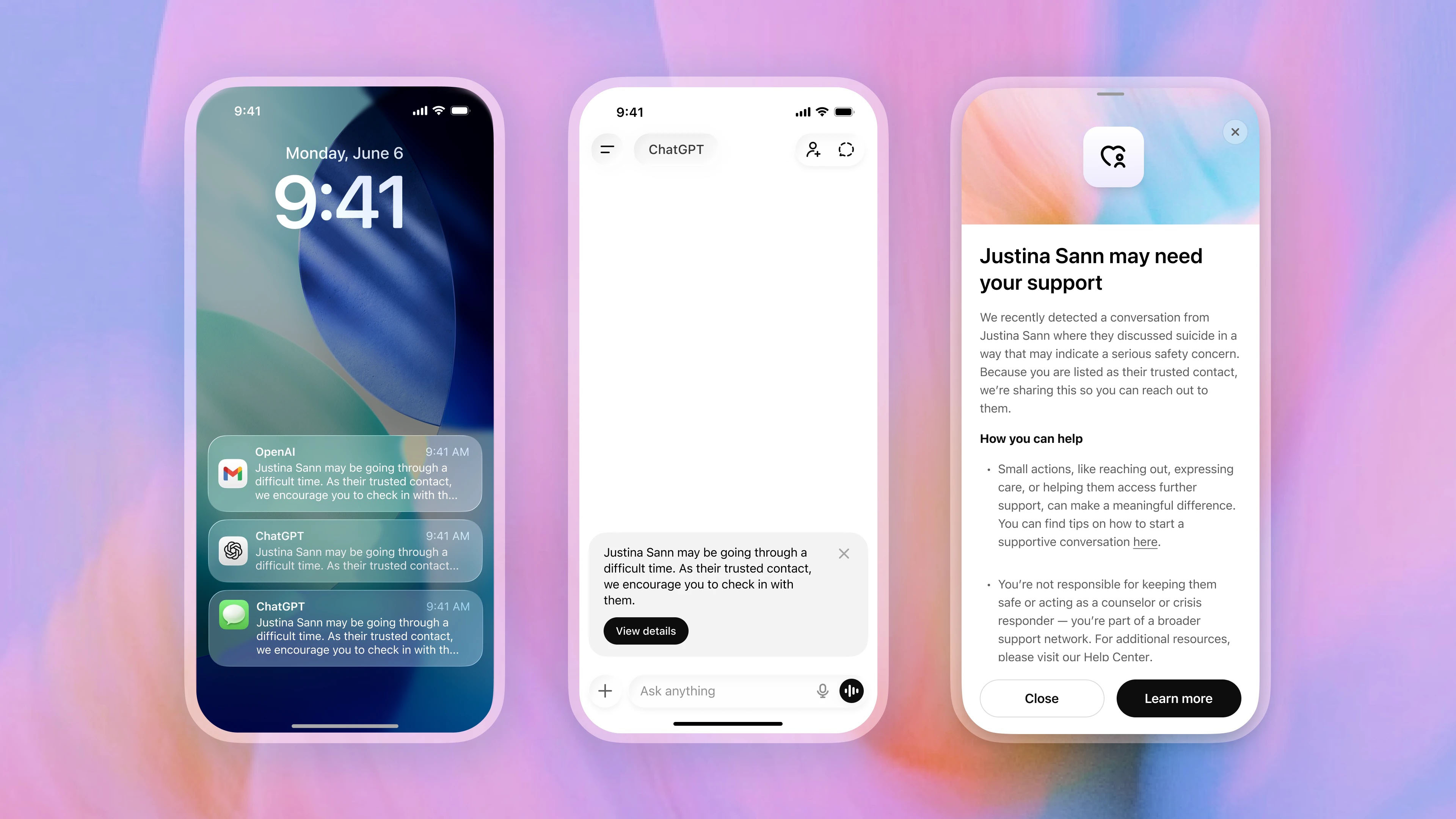

The feature is still rolling out, so Trusted Contact isn’t available to everyone yet. To find it, click or tap your profile name in ChatGPT, then look in Settings. You can nominate a trusted adult contact, who must accept before the feature becomes active.

If ChatGPT’s automated systems detect conversations that may indicate a serious risk of self-harm, the user is warned that their Trusted Contact could be notified and is encouraged to reach out to that person themselves first.

A specially trained human review team then assesses the situation before any alert is sent. If reviewers believe there is a genuine safety concern, the Trusted Contact receives a notification by email, text, or in-app alert encouraging them to check in.

OpenAI says the alerts don’t include chat transcripts or detailed conversation history, in order to protect user privacy, and you can remove or change your Trusted Contact at any time.

Reassuring or unsettling?

OpenAI says Trusted Contact was developed with input from mental-health experts, suicide-prevention specialists, and a global network of more than 260 physicians across 60 countries. Taken together with the parental controls OpenAI has already introduced and the safety guardrails already in place, Trusted Contact is another sign that the company acknowledges ChatGPT can affect users emotionally, not just technologically.

OpenAI’s recent product announcements have certainly played down the use of ChatGPT as a confidant and placed more emphasis on its productivity focus, particularly with the Codex tool for writing code. Yet at the same time, more and more safety features aimed at ChatGPT users’ emotional well-being are being added.

The idea that we’re now being monitored by ChatGPT will be concerning to some. When my colleague Becca Caddy recently interviewed Amy Sutton from Freedom Counselling for an investigation into AI monitoring tools in the workplace, Sutton noted that knowing you’re being monitored by your AI, especially at work, could actually worsen the problem it’s trying to solve. “With mental health stigmas still rife, AI observation would likely lead to greater efforts to hide evidence of struggles,” she said. “This could create a dangerous spiral, where the greater our efforts to hide low mood or anxiety, the worse it becomes.”

Whether Trusted Contact feels reassuring or unsettling probably depends on how you already view AI and ChatGPT. But the feature is another example of AI companies acknowledging that their products aren’t just tools for productivity and information, but systems people may increasingly rely on emotionally during some of the most vulnerable moments of their lives.

Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds.
