Game Developer Deep Dives are an ongoing series with the goal of shedding light on specific design, art, or technical features within a video game in order to show how seemingly simple, fundamental design decisions aren’t really that simple at all.

Earlier installments cover topics such as the sound design of Avatar: Frontiers of Pandora, how camera effects, sound FX, and VFX created a smooth and high-octane movement system in Echo Point Nova, and the technical process behind bringing The Cycle: Frontier to Unreal Editor for Fortnite.

For nearly a decade, Owlchemy Labs has explored what it means to touch the virtual world. From Cosmonious High’s alien high school hijinx, to Job Simulator’s physical comedy, to Vacation Simulator’s playful expressiveness, every game advanced our studio’s mission: make VR interactions so natural that players forget the interface entirely.

Dimensional Double Shift (DDS) represents the next step in that evolution: a full commitment to hand tracking. The result is a new design language grounded in embodiment, iteration, and accessibility.

“Moving away from a controller, where people are used to pressing A or B or pulling a trigger, introduces a lot of new factors,” said Alex Covert, Lead Gameplay Engineer. “Every time we design a new piece of equipment for a dimension, we ask: How will the player actually grab this?”

Systems Engineer Marc Huet describes this shift as both philosophical and technical. “Our goal was to make hands feel present and believable, to minimize dissonance between what you see and what you feel,” he explained. “That meant always keeping hands visible, supporting many grip types, and making sure the virtual hand never broke immersion.”

The Problem

1. Lessons Learned from Early Failures

Early prototypes exposed the hidden complexity of human motion. “We had issues with hand sizing,” Covert explained. “People felt uncomfortable using a giant hand that didn’t match their own.”

The fix wasn’t a player-facing slider. The system now automatically scales the virtual hands to match the player’s tracked hand size, one of those invisible changes that quietly removes discomfort and makes everything feel more ‘you.’ Small change, big comfort wins. It became a shorthand lesson internally: the best UX improvements are often the ones nobody notices, because nothing feels wrong anymore.
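A minimal sketch of automatic hand scaling, assuming the tracking runtime reports a per-player hand measurement (here a hypothetical wrist-to-middle-fingertip length in meters) and that the hand mesh was authored at a known reference length. `AUTHORED_HAND_LENGTH_M`, the clamp range, and the smoothing factor are illustrative values, not Owlchemy's.

```python
from dataclasses import dataclass

# Hypothetical reference: length of the authored hand mesh, wrist to middle fingertip (meters).
AUTHORED_HAND_LENGTH_M = 0.19


@dataclass
class HandScaler:
    min_scale: float = 0.7   # illustrative clamp so a bad reading can't produce a giant or tiny hand
    max_scale: float = 1.3
    smoothing: float = 0.1   # fraction of the remaining gap closed per update
    scale: float = 1.0

    def target_scale(self, tracked_length_m: float) -> float:
        """Ratio of the player's tracked hand length to the authored mesh, clamped."""
        raw = tracked_length_m / AUTHORED_HAND_LENGTH_M
        return max(self.min_scale, min(self.max_scale, raw))

    def update(self, tracked_length_m: float) -> float:
        """Ease toward the target so the hand never visibly pops to a new size."""
        target = self.target_scale(tracked_length_m)
        self.scale += (target - self.scale) * self.smoothing
        return self.scale
```

Easing rather than snapping keeps the change invisible, which matches the "nobody notices" goal; the clamp guards against a momentary bad measurement.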

Sometimes the obstacles weren’t ergonomic but systemic. “We learned that a sock-puppet prototype conflicted with Meta’s hand-tracking system,” Covert recalled.

The problem was less technical than behavioral. The very first thing most people do with a puppet is talk to themselves. In practice, that motion turned out to be similar to Meta’s system menu gesture. Instead of animating a character, players were repeatedly summoning the OS.

There was no clever workaround. The gesture belonged to the platform. So the team didn’t redesign the interaction; they cut puppets.

Huet’s early experimentation echoed these constraints. “At first, our grab detection was binary: you were either grabbing or not,” he said. “It worked for Vacation Simulator, but it didn’t feel alive. We wanted to see your fingers react, your hands exist in the world.”

The team’s first step toward that goal was rebuilding their “grab logic” from the ground up. Huet described the process as discovering just how unpredictable real human motion is: “There are so many valid ways to grab something; we had to figure out which of those looked right in VR.”

Image via Owlchemy Labs/Google

2. Natural vs. Intuitive

Through hundreds of hours of playtesting, the team learned that natural and intuitive don’t always align.

“Every person does it differently,” Covert said. “Some pinch, some grab. The closer we get to how people actually use their hands, the less we have to explain.”

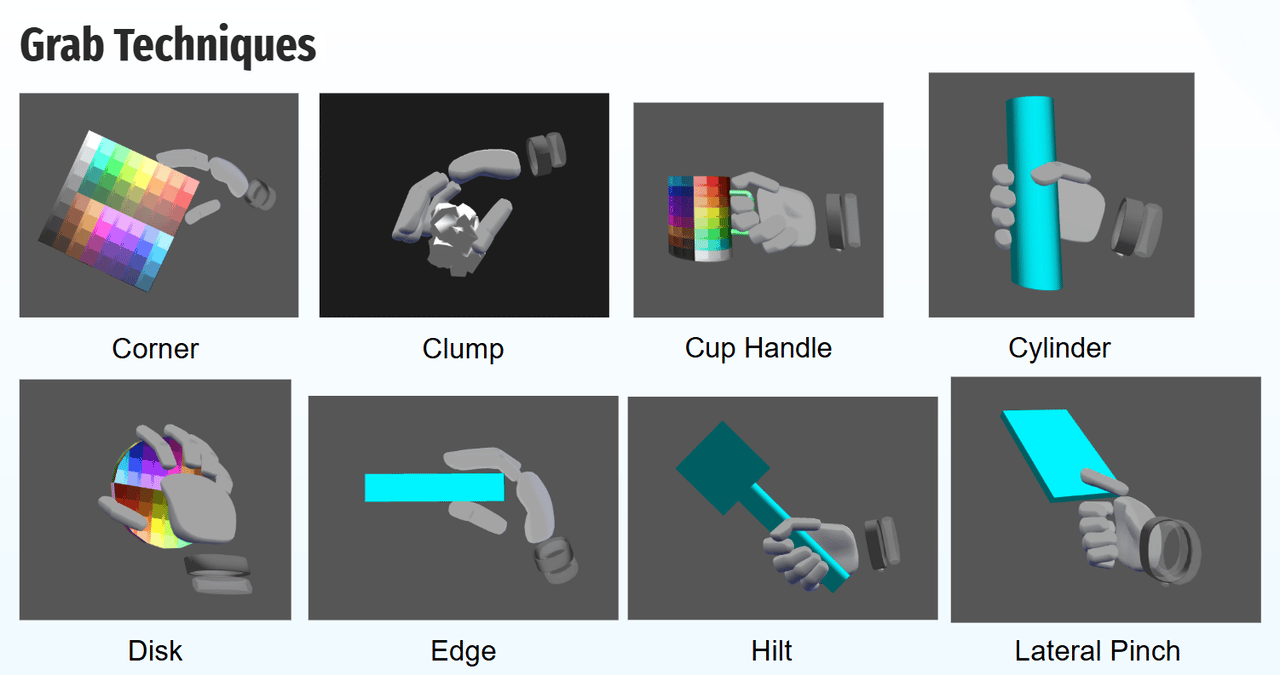

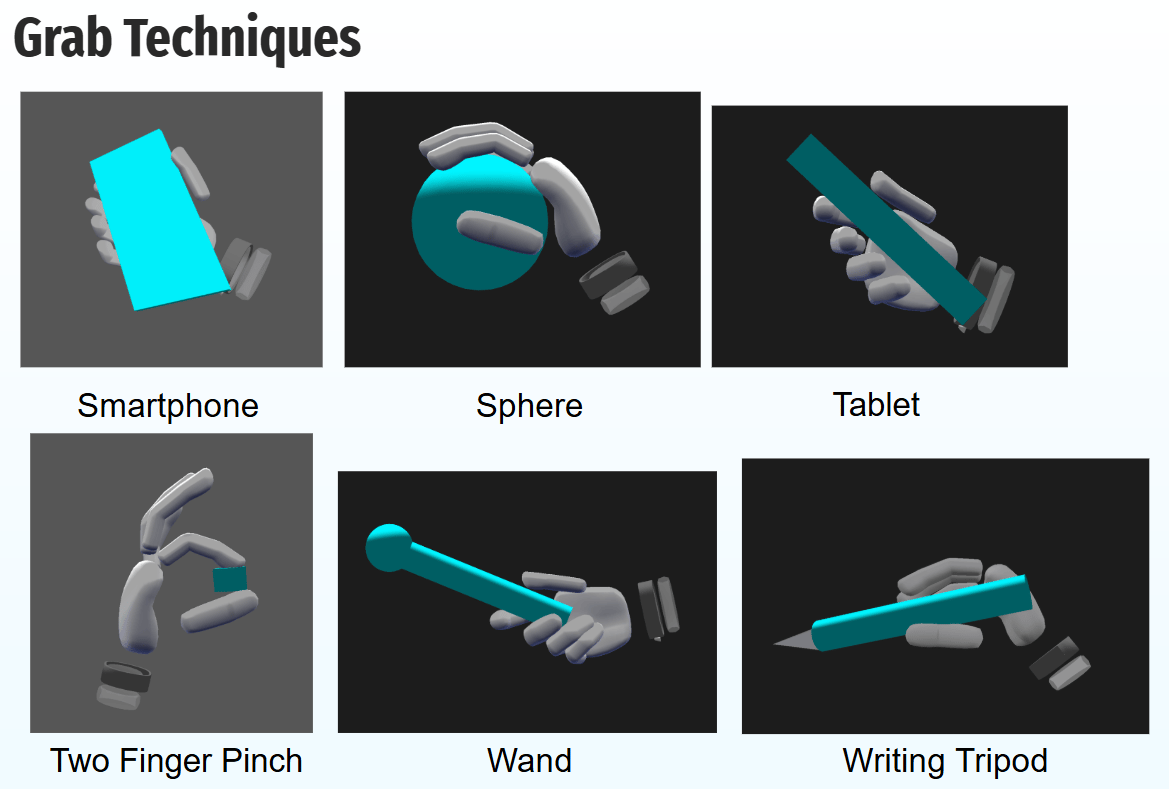

Huet’s research helped formalize that variability. Drawing on human-factors research into everyday grasping, such as a 2016 IEEE study by robotics researchers Tomáš Feix and colleagues that categorizes common human grips based on form and intent, Huet built a library of grab methods for VR. It includes grips like corner, clump, cylinder, hilt, and wand, each mapped to real-world use.
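One way to represent a grip library like this is a table of authored finger-curl profiles keyed by grip name, with the runtime picking the profile closest to the player's current hand. The grip names come from the text above; the curl numbers are invented placeholders, and nearest-neighbor matching is an assumption for illustration, since the article does not say how grips are selected.

```python
import math

# Per-finger curl, 0.0 = straight, 1.0 = fully curled, ordered thumb..pinky.
# All values are hypothetical placeholders, not Owlchemy's authored data.
GRIP_LIBRARY: dict[str, tuple[float, ...]] = {
    "corner":   (0.3, 0.4, 0.4, 0.2, 0.2),
    "clump":    (0.6, 0.6, 0.6, 0.6, 0.6),
    "cylinder": (0.5, 0.8, 0.8, 0.8, 0.8),
    "hilt":     (0.7, 0.9, 0.9, 0.9, 0.9),
    "wand":     (0.4, 0.3, 0.7, 0.8, 0.8),
}


def closest_grip(finger_curls: tuple[float, ...]) -> str:
    """Return the library grip whose curl profile is nearest (Euclidean) to the tracked hand."""
    return min(
        GRIP_LIBRARY,
        key=lambda name: math.dist(GRIP_LIBRARY[name], finger_curls),
    )
```

In practice an object would likely restrict which grips it accepts (a mug handle versus a broom), with a match like this choosing among the allowed ones.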

“We looked at how people hold cups, phones, and tools,” he explained. “Every grip has a story, a context, and we wanted that reflected in VR.” He also coined internal terms like “closedness,” a 0-to-1 measure of how open or closed the player’s hand is, used to define when a grab occurs.
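A minimal sketch of a 0-to-1 closedness value, assuming the tracking runtime exposes a curl angle per finger. Averaging normalized curls, `MAX_CURL_DEG`, and `GRAB_THRESHOLD` are assumptions for illustration; the article defines only the 0-to-1 range and its role in deciding when a grab occurs.

```python
# Hypothetical: angle (degrees) at which a finger counts as fully curled.
MAX_CURL_DEG = 90.0
GRAB_THRESHOLD = 0.6  # illustrative value


def closedness(curl_angles_deg: list[float]) -> float:
    """Map per-finger curl angles to a single value: 0.0 = open hand, 1.0 = fist."""
    if not curl_angles_deg:
        return 0.0
    # Clamp each finger to 0..1 so hyperextension or over-curl can't skew the average.
    normalized = [min(max(a / MAX_CURL_DEG, 0.0), 1.0) for a in curl_angles_deg]
    return sum(normalized) / len(normalized)


def wants_grab(curl_angles_deg: list[float]) -> bool:
    return closedness(curl_angles_deg) >= GRAB_THRESHOLD
```

Collapsing five fingers into one scalar makes "when does a grab happen" a single tunable number instead of a per-finger rule set.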

Image via Owlchemy Labs/Google

“Some players over-grab, some pose-match,” Huet said. “You have to balance both so things don’t feel sticky or slippery. It’s subtle math that ends up shaping how people play.”
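A common general-purpose way to balance "sticky" against "slippery" is hysteresis: the hand must close past a higher threshold to start a grab but open past a lower one to release, so tracking jitter around a single cutoff can't repeatedly drop and re-grab an object. This sketch shows that generic technique with assumed threshold values; it is not a description of Owlchemy's actual math.

```python
from dataclasses import dataclass


@dataclass
class GrabState:
    grab_at: float = 0.65     # closedness needed to start holding (illustrative)
    release_at: float = 0.45  # closedness below which the object is let go (illustrative)
    holding: bool = False

    def update(self, closedness: float) -> bool:
        """Feed the current 0..1 closedness; returns whether the object is held."""
        if not self.holding and closedness >= self.grab_at:
            self.holding = True
        elif self.holding and closedness < self.release_at:
            self.holding = False
        return self.holding
```

Widening the gap between the two thresholds makes grabs stickier; narrowing it makes them more slippery.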

This systematization allowed the team to move beyond imitation toward consistency. “It’s fascinating research,” Covert noted. “Marc showed pictures of the different hand poses people use when grabbing things: holding a phone versus holding a cup. It grounded our approach.”

Image via Owlchemy Labs/Google

The Strategy

1. Designing for Accessibility Through Simplicity

Removing controllers didn’t just change the interface; it opened the door to more inclusive play.

“One of our big considerations is one-handed players,” said Covert. “At one point, we had a two-handed, twist-style pepper shaker, but that created accessibility problems. So we added a shake alternative.”
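A one-handed shake path can be detected by counting direction reversals in the hand's vertical velocity over a short window of frames. The window length, speed floor, and reversal count are assumptions for this sketch, and velocity units (m/s) are assumed to come from the tracking runtime.

```python
from collections import deque


class ShakeDetector:
    """Counts sign flips in vertical hand velocity; enough flips in the window = a shake."""

    def __init__(self, window: int = 30, min_speed: float = 0.3, reversals_needed: int = 4):
        self.samples: deque[float] = deque(maxlen=window)  # last N frames of vertical velocity
        self.min_speed = min_speed            # ignore jitter slower than this (illustrative)
        self.reversals_needed = reversals_needed

    def add_sample(self, vertical_velocity: float) -> bool:
        # Keep every frame so old motion ages out of the window once the hand stops.
        self.samples.append(vertical_velocity)
        fast = [v for v in self.samples if abs(v) >= self.min_speed]
        reversals = sum(
            1 for prev, cur in zip(fast, fast[1:]) if (prev > 0) != (cur > 0)
        )
        return reversals >= self.reversals_needed
```

The shaker would then dispense when either the two-handed twist or this detector fires, so neither input is required.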

This philosophy carried through every mechanic. DDS introduced “snap points” for setting items down easily, freeing a player’s hand mid-task.
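A snap point can be modeled as a target position plus a capture radius: when the player releases an item near one, the item settles onto the closest free point instead of falling. The radius, the occupancy flag, and the plain 3-tuple position type are assumptions for this sketch.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class SnapPoint:
    position: Vec3
    radius: float = 0.15   # meters; illustrative capture distance
    occupied: bool = False


def resolve_release(item_pos: Vec3, snap_points: list[SnapPoint]) -> Vec3:
    """Return where a released item ends up: the nearest free snap point in range, else where it was let go."""
    in_range = [
        p for p in snap_points
        if not p.occupied and math.dist(p.position, item_pos) <= p.radius
    ]
    if not in_range:
        return item_pos  # no snap: leave the item to normal physics
    best = min(in_range, key=lambda p: math.dist(p.position, item_pos))
    best.occupied = True
    return best.position
```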

“In the diner, the squeeze bottles have a threshold for how hard you need to squeeze,” said Emma Atkinson, Technical Designer. “But if that’s difficult, you can just tilt them and they’ll pour out.”
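The squeeze bottle shows two independent input paths converging on one outcome. This sketch assumes a 0-to-1 squeeze strength and the bottle's tilt angle from upright are both available; `SQUEEZE_THRESHOLD`, `TILT_THRESHOLD_DEG`, and the pour-rate curve are hypothetical.

```python
SQUEEZE_THRESHOLD = 0.7     # hypothetical squeeze strength needed to pour
TILT_THRESHOLD_DEG = 100.0  # hypothetical: bottle past horizontal, nozzle pointing down


def pour_rate(squeeze: float, tilt_from_upright_deg: float, max_rate: float = 1.0) -> float:
    """Return a 0..max_rate pour rate; squeezing OR tilting is enough."""
    if squeeze >= SQUEEZE_THRESHOLD:
        # Harder squeezes pour faster: half rate at the threshold, full rate at a full squeeze.
        extra = (squeeze - SQUEEZE_THRESHOLD) / (1.0 - SQUEEZE_THRESHOLD)
        return max_rate * min(1.0, 0.5 + 0.5 * extra)
    if tilt_from_upright_deg >= TILT_THRESHOLD_DEG:
        return max_rate * 0.5  # steady pour for players who can't squeeze hard
    return 0.0
```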

Moving to hand tracking also meant removing unnecessary instruction. Instead of teaching players new button metaphors or gesture rules, the team focused on subtracting friction and trusting existing human intuition. Because people already know how to use their hands, many interactions could be learned simply by doing, without prompts, overlays, or step-by-step tutorials.

Huet extended that philosophy to invisible design systems. “Accessibility isn’t always about menus or toggles,” he said. “Sometimes it’s about thresholds: if a player’s range of motion is limited, we can scale the sensitivity so a smaller gesture still counts. The goal is to let everyone feel capable in VR.”
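A sketch of threshold scaling, assuming each gesture is measured as a distance or angle and that a per-player range-of-motion factor (1.0 = default, smaller = more limited) is available from some calibration or settings step. The factor and the `min_fraction` floor are hypothetical; the article doesn't say how Owlchemy measures or applies it.

```python
from dataclasses import dataclass


@dataclass
class GestureThreshold:
    default_amount: float        # e.g. degrees of wrist twist at full range of motion
    min_fraction: float = 0.25   # never shrink below this, so tracking noise can't trigger gestures


def required_amount(threshold: GestureThreshold, range_of_motion: float) -> float:
    """Shrink the motion a player must perform in proportion to their range of motion."""
    fraction = max(threshold.min_fraction, min(1.0, range_of_motion))
    return threshold.default_amount * fraction


def gesture_counts(amount: float, threshold: GestureThreshold, range_of_motion: float = 1.0) -> bool:
    return amount >= required_amount(threshold, range_of_motion)
```

Usage: with `GestureThreshold(90.0)`, a 50-degree twist fails at the default range but counts for a player whose range-of-motion factor is 0.5.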

That sensitivity scaling, originally built for testing, became a subtle but powerful accessibility feature. “You don’t have to announce accessibility,” Huet added. “You can just design it in.”

2. Creating Self-Haptics

Without vibration motors or triggers, the team reimagined tactile feedback from the ground up.

“The squishable UI buttons and keyboards give tactile feedback because your fingers touch each other,” Covert explained. “That’s what we call self-haptics. You feel yourself performing the action instead of relying on vibration.”

Atkinson added, “You don’t want your hand to become the object; you want to hold it. We try to minimize cognitive dissonance between what you see and what you feel.”

Huet built on that idea technically, integrating collision physics that made contact feel grounded. “The raw hand data comes straight from the device,” he explained. “We then run physics updates to see where your virtual hand should be after impact. There’s a little tolerance, enough to give you that ‘hit’ feeling before it breaks through.”
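The pattern described here, where the raw tracked pose is the target and a physics step decides where the visible hand actually ends up, can be reduced to one dimension for illustration. The virtual hand stops at a surface until the real hand has pushed past it by more than a tolerance, then follows through. The 1D simplification and `PENETRATION_TOLERANCE` are assumptions for this sketch.

```python
PENETRATION_TOLERANCE = 0.03  # meters the real hand may pass a surface before the virtual hand follows (illustrative)


def resolve_hand_position(tracked_x: float, surface_x: float) -> float:
    """1D sketch: the solid region is x >= surface_x, approached from below.

    Returns the x at which to draw the virtual hand.
    """
    if tracked_x <= surface_x:
        return tracked_x  # free space: follow tracking exactly
    penetration = tracked_x - surface_x
    if penetration <= PENETRATION_TOLERANCE:
        return surface_x  # resist: the hand visibly stops on contact (the "hit")
    return tracked_x  # past tolerance: break through rather than drift far from the real hand
```

The tolerance is the trade-off: large enough that the stop registers visually, small enough that the drawn hand never lags far behind the player's real one.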

He described it as “letting physics fake haptics.” “You’re not feeling a vibration,” he said, “but when the virtual hand resists or stops, your brain fills in the feedback.”

That philosophy, turning embodiment itself into feedback, became core to Owlchemy’s design lexicon, alongside “bubble pass,” the intuitive hand-to-hand object transfer system.

3. Designing for Shared Gestures

Early builds tried to make passing explicit: both players had to perform a synchronized ‘handoff’ gesture. The team replaced it with what became the ‘bubble pass’ (an idea from Tim Winsky, implemented by Systems Engineer Marc Huet), in which a thrown item hovers in front of the other player long enough to be grabbed. The result is more playful, and it teaches itself.
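A minimal sketch of the bubble-pass idea: when a thrown item comes within range of a hover point in front of the receiving player, it is captured and held there for a short window, then released back to physics if nobody grabs it. `hover_point`, `capture_radius`, `hover_seconds`, and the simple distance test are hypothetical; the article does not describe the implementation.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class BubblePass:
    hover_point: Vec3             # spot in front of the receiving player (hypothetical)
    capture_radius: float = 0.5   # meters
    hover_seconds: float = 2.0
    elapsed: float = 0.0
    active: bool = False

    def try_capture(self, item_pos: Vec3) -> bool:
        """Start hovering if the thrown item is close enough to the hover point."""
        if math.dist(item_pos, self.hover_point) <= self.capture_radius:
            self.active = True
            self.elapsed = 0.0
        return self.active

    def tick(self, dt: float, grabbed: bool) -> bool:
        """Advance the hover; returns True while the item should stay floating."""
        if not self.active:
            return False
        self.elapsed += dt
        if grabbed or self.elapsed >= self.hover_seconds:
            self.active = False  # caught, or timed out: hand back to physics
        return self.active
```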

Even during internal playtests, people discovered the new pass system and had that moment of delight when they figured it out on their own.

The change was data-driven. “We added analytics to see how often players used the old passing system and found that almost nobody was using it,” Covert said. “That told us it was time to rethink the design.”

Huet’s grab system played an unseen role here too. “When you toss something, timing matters; milliseconds affect how real it feels,” he said. “So we built in what we call ‘sticky’ and ‘slippery’ release thresholds. It’s how you tell the difference between handing off a mug and throwing a beach ball.”

His experiments revealed that speed and openness need to respond to intent. “If you open your hand slowly, the object should linger. If you open fast, it should fly,” Huet explained. “These little cues make shared gestures feel believable.” The system also learns what ‘I’m ready’ looks like: hand up, waiting. When it sees that, it helps the object meet the hand, so catching feels intentional instead of accidental.
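These release rules can be sketched by measuring how fast closedness drops as the hand opens and carrying the hand's velocity into the object only on a fast open. The opening-speed cutoff and the "ready to catch" heuristic (hand raised, open, mostly still) are assumptions for illustration, not the system's actual thresholds.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

FAST_OPEN_PER_SEC = 3.0  # closedness units per second; hypothetical cutoff between "set down" and "throw"


@dataclass
class Release:
    kind: str       # "linger" or "throw"
    velocity: Vec3  # velocity handed to the physics object


def classify_release(closedness_drop: float, seconds: float, hand_velocity: Vec3) -> Release:
    """Slow opening: the object lingers near the hand. Fast opening: it inherits hand velocity."""
    opening_speed = closedness_drop / max(seconds, 1e-6)
    if opening_speed >= FAST_OPEN_PER_SEC:
        return Release("throw", hand_velocity)
    return Release("linger", (0.0, 0.0, 0.0))


def looks_ready_to_catch(hand_height_above_waist: float, closedness: float, speed: float) -> bool:
    """'Hand up, waiting': raised, open, and mostly still (all thresholds hypothetical)."""
    return hand_height_above_waist > 0.2 and closedness < 0.3 and speed < 0.2
```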

4. Designing Around Technical Limits

Hardware realities still present creative challenges. “When you point away from yourself with the sprayer, your hand can block the headset cameras, so it doesn’t always detect the motion,” Covert explained.

Instead of seeing such limitations as setbacks, the team treats them as opportunities for innovation. “We’ve even talked about adding aerosol spray cans where you press the top with your index finger,” Covert added. “We don’t have support for that yet, but it’s on the wishlist.”

Huet elaborated on these constraints: “Hand occlusion, lighting, tracking speed: these are constant battles,” he said. “If a hand moves too fast or leaves the camera’s field of view, tracking breaks. So we design around that by keeping actions close, inside this invisible box in front of you.”

Environmental conditions also shape interaction design. Hand tracking behaves differently depending on lighting, camera visibility, and the underlying capabilities of the headset itself. In low-light or high-occlusion situations, tracking data can become noisy.

Why?

Well, not all systems compensate for that noise in the same way. Some include infrared illuminators to support hand tracking in low-light conditions, while others degrade faster as lighting drops.
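Because noise varies with headset and lighting, one generic mitigation is to smooth tracked positions more heavily when the tracker reports low confidence. This sketch is a plain exponential moving average whose blend factor scales with a 0-to-1 confidence value; the confidence input and the alpha range are assumptions, and the technique is a common one rather than anything the article attributes to Owlchemy or Meta.

```python
from dataclasses import dataclass
from typing import Optional

Vec3 = tuple[float, float, float]


@dataclass
class ConfidenceSmoother:
    min_alpha: float = 0.1   # heavy smoothing when confidence is near 0 (illustrative)
    max_alpha: float = 0.9   # light smoothing when confidence is near 1 (illustrative)
    state: Optional[Vec3] = None

    def update(self, raw: Vec3, confidence: float) -> Vec3:
        """Blend the new sample into the running estimate; low confidence moves it less."""
        c = max(0.0, min(1.0, confidence))
        alpha = self.min_alpha + (self.max_alpha - self.min_alpha) * c
        if self.state is None:
            self.state = raw  # first sample: nothing to smooth against yet
        else:
            self.state = tuple(s + alpha * (r - s) for s, r in zip(self.state, raw))
        return self.state
```

Heavier smoothing adds latency, so a design like this trades responsiveness for stability only when the data is least trustworthy.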

Rather than treating these moments as failure states, the team designed interactions to be forgiving by default: dropped objects recover, missed grabs self-correct, and small errors never cascade into frustration. The guiding principle was simple: players should never feel punished by physics or hardware constraints.
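"Dropped objects recover" can be as simple as watching for items that rest on the floor too long or fall out of the level, and returning them to a home position. The floor height, grace period, and return-to-home policy are hypothetical; the article doesn't specify how recovery works.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Recoverable:
    position: Vec3
    home: Vec3              # where the item returns, e.g. its station (hypothetical)
    lost_for: float = 0.0   # seconds spent resting on the floor


def recover_if_lost(item: Recoverable, dt: float, floor_y: float = 0.0,
                    grace_seconds: float = 1.0) -> bool:
    """Return True if the item was sent back home this frame."""
    fell_through = item.position[1] < floor_y - 0.5   # escaped the level geometry
    on_floor = item.position[1] <= floor_y + 0.05     # dropped and lying on the floor
    item.lost_for = item.lost_for + dt if on_floor else 0.0
    if fell_through or item.lost_for >= grace_seconds:
        item.position = item.home
        item.lost_for = 0.0
        return True
    return False
```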

The Outcomes

Hand tracking didn’t just broaden accessibility. It made play feel human.

Players naturally gestured, waved, and improvised together. They learned by doing, not by reading.

“We almost don’t need to design new ways of doing things,” Covert said. “The closer we get to how people actually use their hands, the more natural the game feels.”

Huet added, “Our success is when people stop noticing the system. When your virtual hand feels like your hand, when you don’t think about grabbing and you just grab, that’s when VR disappears and embodiment takes over.”

During development, the team’s iterative design language took shape. Terms like self-haptics and bubble pass became shorthand for a culture of experimentation and discovery. “You could almost make a dictionary of the words we’ve made up,” Atkinson laughed. “It’s our own language.”

For Owlchemy Labs, hand tracking reaffirmed its core philosophy: interactions should be instinctive, inclusive, and joyful.

“We’re still improving our authoring tools,” Huet said. “But every iteration teaches us something new about how humans move, and how to make VR move with them.”

Image via Owlchemy Labs/Google

Key Takeaways

- Hand tracking is both interface and embodiment. Designing gestures means understanding how people feel feedback, not just how they perform it.

- Authoring meets procedural motion. Robust hand-tracking systems let developers lock poses when precision matters (e.g., picking up a cup one way) while still allowing motion to adapt to how players actually reach, slide, and settle into a grab. The mix of authorship and procedurality is a key aspect of our system that many developers miss; they try too hard to do one or the other.

- Accessibility improves when interaction simplifies. Removing hardware often clarifies rather than complicates the experience.

- Iteration is the constant. Every friction point (hand size, gesture mismatch, or camera occlusion) drives better design.

- Analytics close the loop. Observation, data, and playtesting inform every refinement.

- VR’s future is hands-on. Interaction and embodiment are not separate disciplines. They’re one and the same.
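The takeaway about authoring meeting procedural motion can be illustrated with a per-finger blend: while the hand is still reaching, finger curls follow tracking; as the grab settles, they ease toward an authored pose, with a per-object weight deciding how strictly that pose is enforced. The linear blend, the `settle` parameter, and `authored_weight` are assumptions for this sketch, not a description of Owlchemy's system.

```python
def blend_finger_curls(tracked: list[float], authored: list[float],
                       settle: float, authored_weight: float) -> list[float]:
    """Blend tracked and authored finger curls (each 0..1).

    settle: 0 while reaching, rising to 1 once the grab has settled.
    authored_weight: 1.0 locks the authored pose (a precision grip such as a cup handle);
    lower values leave room for the player's own finger placement.
    """
    if len(tracked) != len(authored):
        raise ValueError("tracked and authored poses must have the same number of fingers")
    t = max(0.0, min(1.0, settle)) * max(0.0, min(1.0, authored_weight))
    return [tr + (au - tr) * t for tr, au in zip(tracked, authored)]
```

Usage: `blend_finger_curls([0.2, 0.4], [0.8, 0.8], settle=1.0, authored_weight=0.5)` lands halfway between the player's pose and the authored one.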
