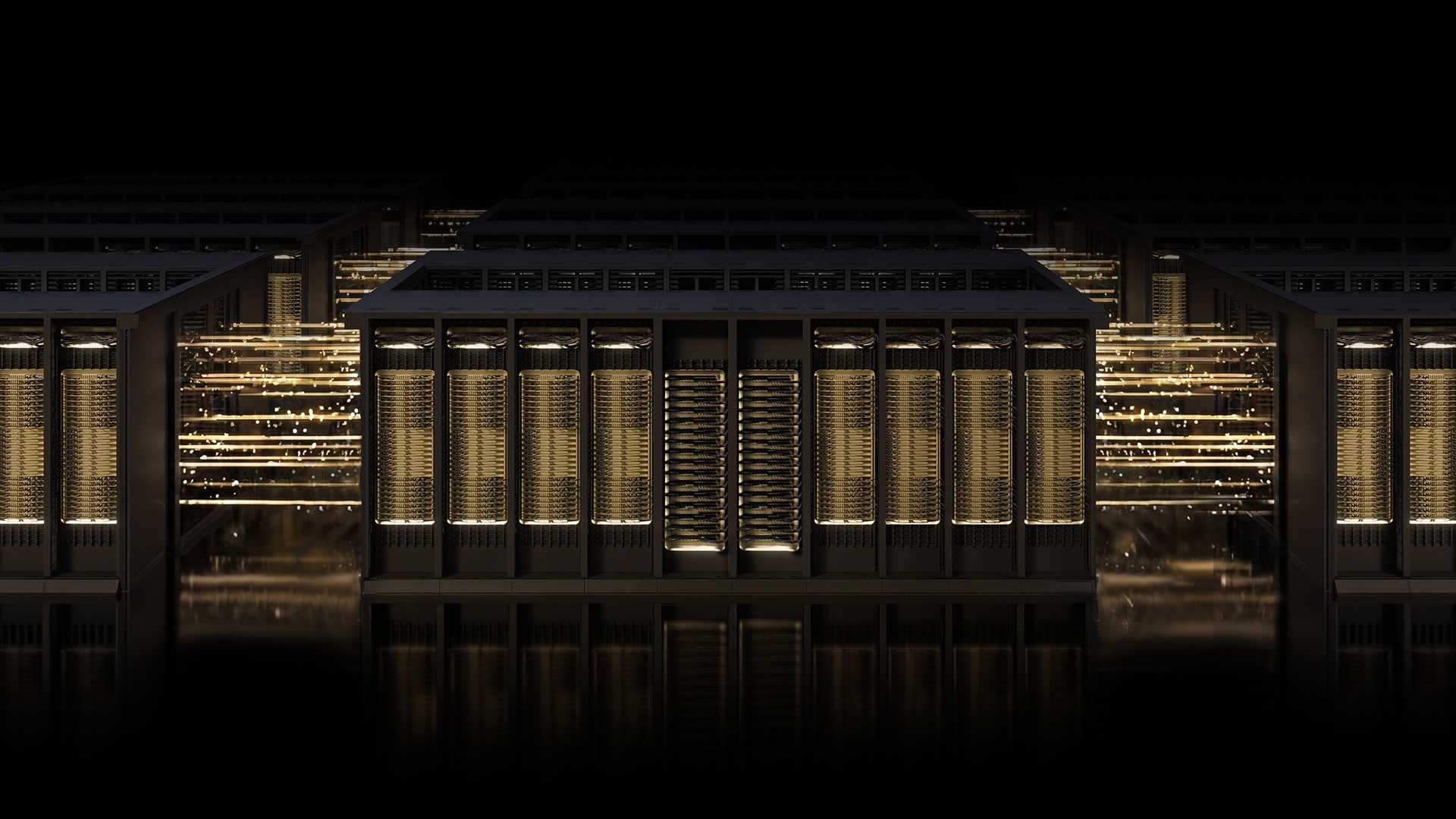

The race to build the world’s most powerful AI factories calls for networking that keeps pace with the ambitions of AI itself.

NVIDIA Spectrum-X Ethernet scale-out infrastructure stands at the forefront of that race as the most advanced AI networking technology available today, deployed by industry leaders who can’t afford to compromise on performance, resilience or scale.

That includes OpenAI, Microsoft and Oracle.

Companies including NVIDIA, Microsoft and OpenAI have demonstrated industry leadership by introducing Multipath Reliable Connection (MRC), an RDMA transport protocol. MRC allows a single RDMA connection to distribute traffic across multiple network paths, improving throughput, load balancing and availability for large-scale AI training fabrics.

Think of it as replacing a single-lane road spanning a city with a cleverly laid-out street grid paired with a real-time traffic app, enabling drivers to reroute around slowdowns and road closures.

“Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of commercial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

In addition, Microsoft and NVIDIA have a longstanding collaboration focused on advancing the infrastructure required for the next generation of AI. Microsoft’s Fairwater and Oracle Cloud Infrastructure’s (OCI) Abilene data center, two of the largest AI factories purpose-built for training and deploying modern frontier LLMs, rely on MRC to deliver on performance, scale and efficiency requirements. NVIDIA Spectrum-X Ethernet is suited for this environment, helping provide the network foundation needed to run large-scale AI models and applications with confidence.

Proven first in production with performance optimized on NVIDIA Spectrum-X Ethernet hardware and now released as an open specification through the Open Compute Project, MRC demonstrates the strength of the Spectrum-X Ethernet platform: purpose-built hardware, deep telemetry and intelligent fabric control working together to take a new protocol (a set of rules that controls how data moves between two systems across a network) from concept to gigascale AI production.

MRC delivers high levels of GPU utilization by load-balancing traffic across all available paths, enabling every GPU to get the bandwidth it needs throughout a training run. It sustains high bandwidth even under congestion by dynamically avoiding overloaded paths in real time.
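To make the load-balancing idea concrete, here is a minimal Python sketch that assigns each packet of one connection to whichever path currently has the smallest backlog. It is an illustration only, not the MRC algorithm: the `Path` class, the `queued_bytes` congestion signal and the `spray` helper are hypothetical names invented for this example, and real implementations make these decisions in NIC and switch hardware.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    queued_bytes: int = 0  # hypothetical stand-in for a congestion signal


def pick_path(paths):
    """Return the path with the smallest backlog (ties go to the first one)."""
    return min(paths, key=lambda p: p.queued_bytes)


def spray(paths, packet_sizes):
    """Assign each packet of a single connection to the least-loaded path."""
    assignment = []
    for size in packet_sizes:
        path = pick_path(paths)
        path.queued_bytes += size  # the chosen path now carries this packet
        assignment.append(path.name)
    return assignment


# Path B starts out congested, so early packets steer around it.
paths = [Path("A"), Path("B", queued_bytes=8000), Path("C")]
assignment = spray(paths, [4000] * 6)
print(assignment)  # ['A', 'C', 'A', 'C', 'A', 'B']
```

Path B only receives traffic once the other paths have caught up with its backlog, which is the toy equivalent of steering around an overloaded link.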

When data loss occurs, intelligent retransmission enables rapid, precise recovery, minimizing the impact of short-lived interruptions on long-running jobs and helping avoid GPU idle time.
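One common way to make recovery precise is selective retransmission, where only the packets that actually went missing are sent again. The sketch below assumes that approach purely for illustration; the article does not say which mechanism MRC uses, and the function names are hypothetical.

```python
def missing_packets(total, received):
    """Sequence numbers in [0, total) that the receiver has not seen."""
    return sorted(set(range(total)) - set(received))


def deliver(total, lost_on_first_try):
    """Send `total` packets once, then resend only the ones that were lost."""
    received = [seq for seq in range(total) if seq not in lost_on_first_try]
    to_resend = missing_packets(total, received)
    received.extend(to_resend)  # assumption: the retries arrive intact
    return to_resend, len(received)


resent, delivered = deliver(total=10, lost_on_first_try={3, 7})
print(resent, delivered)  # [3, 7] 10
```

Only two of the ten packets are sent a second time, so a brief loss costs a small amount of extra traffic instead of a restart of the whole transfer.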

Administrators also gain fine-grained visibility and control over traffic paths, simplifying operations and accelerating troubleshooting at scale.

MRC, deployed on Spectrum-X Ethernet, is optimized and engineered for resilience at large scale. Its failure bypass technology can detect a network path failure and reroute traffic automatically in hardware, all in just microseconds.

This failure bypass technology matters for AI training clusters where thousands of GPUs must stay synchronized, as even a brief network disruption can slow or interrupt an entire training job. Spectrum-X Ethernet prevents that by responding at hardware speed, keeping traffic flowing along precise pathways across gigascale AI fabrics.
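The logic of bypassing a failed path can be sketched in a few lines of Python. This is a toy model with hypothetical names (`MultipathConnection`, `mark_failed`, `route`); the real mechanism runs in hardware at microsecond timescales, which software like this cannot reproduce.

```python
from itertools import cycle


class MultipathConnection:
    """Toy model: one connection that rotates packets over healthy paths."""

    def __init__(self, path_names):
        self.healthy = list(path_names)

    def mark_failed(self, name):
        """Take a path out of rotation once a failure is detected."""
        if name in self.healthy:
            self.healthy.remove(name)

    def route(self, num_packets):
        """Assign packets round-robin over the paths that are still healthy."""
        if not self.healthy:
            raise RuntimeError("no healthy path left")
        paths = cycle(self.healthy)
        return [next(paths) for _ in range(num_packets)]


conn = MultipathConnection(["p0", "p1", "p2", "p3"])
before = conn.route(4)
conn.mark_failed("p2")  # simulate a link failure on p2
after = conn.route(4)
print(before)  # ['p0', 'p1', 'p2', 'p3']
print(after)   # ['p0', 'p1', 'p3', 'p0']
```

The connection itself survives the failure; only the set of paths it uses shrinks, so the training job keeps moving data while the failed link is out of service.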

Another innovation key to reaching gigascale AI factories is multiplanar network design, which OpenAI deploys with Spectrum-X Ethernet in conjunction with MRC. A multiplane network consists of multiple independent network fabrics, or planes, each providing an alternate communication path between GPUs.

The NVIDIA Spectrum-X Multiplane capability enhances this network architecture by supporting hardware-accelerated load balancing across the planes, boosting resiliency and scale without sacrificing performance. This keeps latencies predictably low while scaling to hundreds of thousands of GPUs.

With Spectrum-X Ethernet, customers have a choice of RDMA transport models. Both Spectrum-X Ethernet Adaptive RDMA and MRC protocols, as well as other custom protocols, run natively across NVIDIA ConnectX SuperNICs and Spectrum-X Ethernet switches and support multiplanar network designs at gigascale.

In this way, the Spectrum-X Ethernet hardware and software infrastructure that powers today’s largest AI clusters gives customers the flexibility to choose the right transport for their workload.

The MRC transport protocol is the latest example of how the industry is using Spectrum-X Ethernet as a flexible, composable platform that integrates across the full breadth of modern AI infrastructure.

As AI factories continue to scale, the network must do more than move data quickly. It must be intelligent, resilient and based on open standards. NVIDIA Spectrum-X Ethernet delivers on all three, and with MRC, it continues to set the standard for advanced AI networking.

NVIDIA collaborated on MRC development with AMD, Broadcom, Intel, Microsoft and OpenAI.

Learn more about NVIDIA Spectrum-X Ethernet on the webpage, datasheet and technical whitepaper.

See notice regarding software product information.
